The Car Has Become a Computer You Live In

For most of automotive history, the dashboard was a concession: a necessary cluster of dials, switches, and gauges bolted onto a machine whose primary purpose was locomotion. The interface was an afterthought. The car was the product.

That logic has collapsed.

In 2026, the vehicle interior is the product. The drivetrain is infrastructure. What differentiates one platform from another is no longer torque or chassis geometry; it is the quality, coherence, and intelligence of the interaction layer. Automotive UX has crossed a threshold: from decoration to architecture.

The Anticipatory Shift

The foundational change is not about screens getting bigger or voices getting smarter. It is about the relationship between driver and system inverting. The old model was reactive: you searched the menu, and the car responded. The new model is anticipatory: the system reads context and surfaces what you need before you ask.

BMW iX3 dashboard with integrated AI

BMW's 2026 iX3 operationalizes this with its Alexa+-powered Intelligent Personal Assistant, making it the first production vehicle to integrate a large language model as the primary interaction layer. The system doesn't wait for a command. It holds context across a conversation, links multiple requests in a single utterance, and adapts its responses based on learned behavior. This is not voice control. This is a co-pilot with working memory. The interface is no longer a panel you operate; it is a relationship you maintain.

The implication is structural. Generative UI means the interface is no longer designed once and shipped. It is procedurally assembled at runtime, shaped by who you are, where you are, and what the system infers you need. Static information architecture (the sitemap, the menu tree, the button hierarchy) becomes obsolete as a design deliverable.
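To make "assembled at runtime" concrete, here is a minimal Python sketch, not any OEM's implementation. Everything in it is hypothetical: the `Context` fields, the component names in `CATALOG`, the hand-written relevance rules, and the policy of showing fewer components above 80 km/h. What it illustrates is that the layout becomes the output of a function over context rather than a fixed menu tree.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set

@dataclass
class Context:
    """Hypothetical runtime snapshot: who is driving, where, and what is coming up."""
    driver_id: str
    speed_kmh: float
    battery_pct: float
    next_event_min: Optional[float] = None   # minutes until next calendar event, if any
    preferences: Set[str] = field(default_factory=set)

@dataclass
class Component:
    name: str
    relevance: Callable[[Context], float]     # 0.0 means "do not show"

# Hypothetical component catalog; a real system might learn or generate these scores.
CATALOG: List[Component] = [
    Component("charging_stops", lambda c: 0.9 if c.battery_pct < 20 else 0.0),
    Component("meeting_briefing",
              lambda c: 0.8 if c.next_event_min is not None and c.next_event_min < 15 else 0.0),
    Component("navigation", lambda c: 0.7 if c.speed_kmh > 0 else 0.3),
    Component("media", lambda c: 0.5 if "music" in c.preferences else 0.2),
    Component("climate", lambda c: 0.1),
]

def assemble_ui(ctx: Context, catalog: List[Component]) -> List[str]:
    """Build the visible layout for this moment instead of reading it from a fixed menu tree."""
    # Assumed policy: fewer slots at highway speed to limit glance load.
    slots = 2 if ctx.speed_kmh > 80 else 4
    scored = [(comp.relevance(ctx), comp.name) for comp in catalog]
    visible = [pair for pair in scored if pair[0] > 0]
    # Highest relevance first; Python's sort is stable, so ties keep catalog order.
    visible.sort(key=lambda pair: pair[0], reverse=True)
    return [name for _, name in visible[:slots]]
```

At highway speed with a low battery and a meeting ten minutes away, this surfaces only charging stops and a meeting briefing; parked with a full battery, it falls back to navigation, media, and climate. A production system would replace the hand-written rules with learned or generated ones, but the shape (context in, layout out) stays the same.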

Glass as Primary Real Estate

Parallel to the intelligence shift is a spatial one. The windshield is no longer just a safety barrier between occupant and road. It is the dominant display surface.

HARMAN's Ready Vision Qvue

HARMAN's Ready Vision Qvue extends a data layer across the full width of the glass. LG's automotive division has deployed transparent OLED windshield technology that overlays navigation, hazard alerts, and contextual environmental data onto the driver's actual field of vision, not adjacent to it. The cognitive load reduction is not incidental. It is the design argument. When information is spatially registered to the world outside the car, the brain stops translating. Lane arrows pin to lanes. Hazard markers float above the actual hazard. The interface becomes continuous with reality rather than a parallel representation of it.

This is augmented reality maturing past the novelty phase and into human factors engineering. The design challenge shifts from screen layout to depth composition: managing what information appears at what focal distance, in what sequence, and at what level of opacity. It is film editing applied to space.
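Depth composition can be sketched the same way. The Python below is an illustration under stated assumptions, not HARMAN's or LG's method: the priority scale, the 2.5 m near plane for non-registered status items, the three-item cap, and the opacity ramp are all hypothetical values. World-registered items (a hazard, a lane arrow) take the distance of the real object they mark; everything else sits on a fixed near plane, and reveal order follows priority.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical tuning values for this sketch only.
NEAR_PLANE_M = 2.5  # fixed focal distance for non-registered status items
MAX_ITEMS = 3       # cap on simultaneous overlays to bound clutter

@dataclass
class ArItem:
    label: str
    priority: int              # hypothetical urgency scale, 0 (ambient) to 3 (critical)
    anchor_m: Optional[float]  # distance to the real object it marks; None if not world-registered

@dataclass
class Placement:
    label: str
    order: int        # reveal sequence, 0 appears first
    focal_m: float    # focal distance in metres
    opacity: float    # 0.0 fully transparent to 1.0 fully opaque

def compose(items: List[ArItem]) -> List[Placement]:
    """Decide focal distance, sequence, and opacity for each overlay."""
    # Most urgent first; anything beyond the cap is not drawn this frame.
    ranked = sorted(items, key=lambda item: item.priority, reverse=True)[:MAX_ITEMS]
    plan = []
    for order, item in enumerate(ranked):
        # Registered items take the depth of the real object they mark.
        focal = item.anchor_m if item.anchor_m is not None else NEAR_PLANE_M
        # Linear opacity ramp: priority 0 -> 0.4, priority 3 -> 1.0, clamped at 1.0.
        opacity = min(1.0, round(0.4 + 0.2 * item.priority, 2))
        plan.append(Placement(item.label, order, focal, opacity))
    return plan
```

Given a hazard 40 m ahead, a lane arrow at 60 m, a speed readout, and a media card, this shows the first three in that order and drops the media card: the hazard is drawn fully opaque at 40 m, and the speed readout sits at 60% opacity on the near plane.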

The Vehicle as Living Platform

The Software-Defined Vehicle reframes ownership entirely. Rivian's 2026.03 OTA update is the clearest current demonstration: Sport Mode expansion, Unreal Engine 5.5 visual rendering upgrades, and Apple Watch integration, all pushed wirelessly to vehicles already in driveways. The car improves after purchase. The experience evolves on a cadence closer to a mobile operating system than to a manufactured product.

Rivian's 2026.03 OTA

This has direct consequences for how value is created and captured. Mercedes-Benz's MBUX fourth-generation platform integrates AI layers from both Microsoft and Google, treating the cockpit as a software environment with multiple concurrent vendors. Instrument cluster, infotainment, ambient systems, and driver assistance now operate as a single unified architecture. The UX is no longer partitioned by component. It is orchestrated across the whole cabin.

Mercedes-Benz's MBUX fourth-generation platform

Zero-UI and the Sentient Cabin

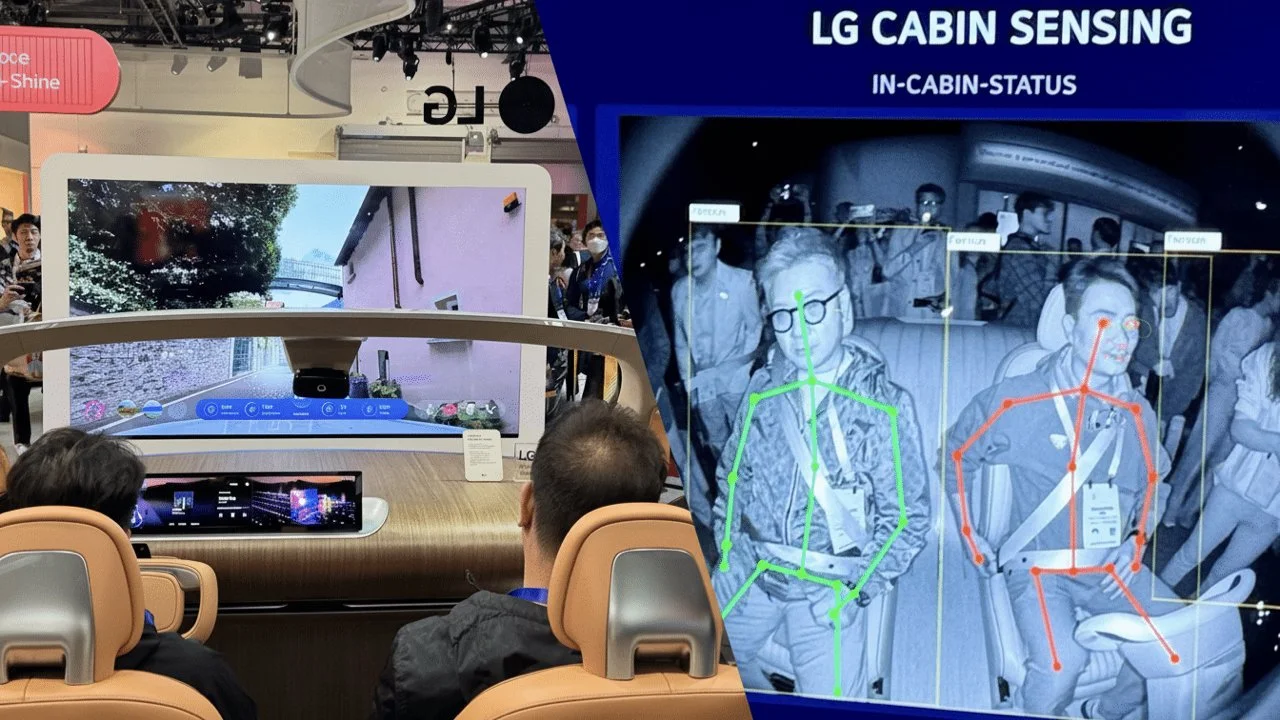

The industry is also moving to reduce deliberate interaction entirely. LG's CES 2026 demonstration cabin uses gaze tracking, gesture recognition, and real-time passenger state analysis to drive responses without explicit commands: passengers don't tap or speak; they simply look, and the system infers intent. Smart Eye's driver monitoring platform, now embedded in over two million vehicles, has added alcohol intoxication detection and fatigue inference, feeding data directly into cabin adaptation systems. The car reads the driver. The interface becomes invisible.

LG's CES 2026 demonstration cabin

This is Zero-UI in practice: not fewer controls, but controls that disappear because the system has already acted.
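Neither LG nor Smart Eye has published how its cabin turns a look into an action, so the following is a generic illustration using dwell-time selection, a long-standing technique in gaze interaction: a target counts as intended only after the eyes stay on it continuously for a threshold. The 800 ms threshold, the sample format, and the function name `infer_intent` are assumptions for this sketch.

```python
from typing import Iterable, Optional, Tuple

DWELL_MS = 800  # assumed dwell threshold for this sketch; not a published value

def infer_intent(samples: Iterable[Tuple[int, Optional[str]]],
                 dwell_ms: int = DWELL_MS) -> Optional[str]:
    """Return the first target fixated continuously for at least dwell_ms, else None.

    samples: time-ordered (timestamp_ms, target) pairs from a gaze tracker,
    where target is a hypothetical UI region name or None (eyes on the road).
    """
    current: Optional[str] = None
    start_ms = 0
    for t_ms, target in samples:
        if target != current:
            # Gaze moved to a new region: restart the dwell timer.
            current, start_ms = target, t_ms
        elif current is not None and t_ms - start_ms >= dwell_ms:
            # Sustained look on the same region: treat it as intent.
            return current
    return None
```

A quick glance at the climate panel triggers nothing; a sustained look at the media panel does. The threshold is the design lever: too short and every glance fires an action (the "Midas touch" problem in gaze interfaces), too long and the interaction stops feeling invisible.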

The Interior as Third Space

The flat-floor EV platform has made possible something the internal combustion chassis never could: the interior as inhabitable space rather than operational cockpit. Kia's EV9, built on E-GMP, configures its cabin as a lounge environment: swiveling second-row seats, a floating panoramic dashboard that minimizes physical controls, and spatial proportions designed around comfort across all rows, not just the driver's position. The car is no longer designed for operation. It is designed for occupation.

The Designer as Systems Architect

What these five convergences share is a common demand: interaction and UX designers are no longer peripheral contributors to automotive programs. They are central.

The disciplines required to execute on this shift (conversational system design, spatial UI for AR environments, multimodal interaction modeling, biometric feedback loops, OTA experience strategy) do not live in mechanical engineering departments. They live in HCI, IxD, and service design. As automotive platforms become software-defined, the talent logic follows. Every new capability described here requires a designer who understands systems at the level of behavior, not just aesthetics.

The global driver monitoring market is projected to double by 2030. AR-HUD adoption is growing at nearly 19% CAGR. The SDV transition is accelerating across every major OEM. Each of these curves generates demand for practitioners who can design across modalities, across time (the OTA update cycle), and across the full human sensory bandwidth, not just the touchscreen rectangle.

The car has become too complex and too intimate to design without them.